How is it going? Will we do this again in the same way?

Good practice involves routine data collection to measure the progress and impact of an activity. Monitoring captures day-to-day activities to show whether the target audience is engaging in an activity and what is happening to them during it. Evaluation measures how well a programme achieves the objectives it set out in Step 2. Don’t forget to allow time and budget for monitoring and research!

This section outlines ideas for monitoring and evaluation (M&E) specific to C4D activities. M&E attempts to measure progress and results. Monitoring tracks activities and the changes that result from them so that the activity can be adjusted during implementation, if needed. Evaluation at the end of implementation measures how well the programme achieved its objectives. It is very important to allow time and budget for implementation research and monitoring.

Often in C4D strategies, a lot of resources are put into producing high-quality media content. If insufficient resources are reserved for M&E activities, it will be very difficult to assess whether the activity achieved the desired change and/or impact. Conversely, if only a small amount of funding was spent on producing a video, it is wise to consider how much should be spent on evaluating its long-term impact. Be mindful of relative costs. The table below distinguishes between monitoring and evaluation:

Table 6: Distinguishing between monitoring and evaluation¹²

| Monitoring | Evaluation |
|---|---|
| Purpose: | Purpose: |
| Answers these questions, among others: | Answers, for example, these questions: |

Monitoring is designed to capture data on the day-to-day activities to gain insights about effectiveness (such as likeability and comprehension) of activities and guide the potential modification of activities (based on feedback and recommendations).

Let’s say, for example, a radio talk show is produced for the purpose of increasing awareness of safe migration practices and related resources available in the community. The qualitative monitoring activities of this talk show should involve two-way communication processes to be able to answer the monitoring questions in Table 6 above.

These monitoring activities could include radio listening clubs, two-way SMS communication, focus group discussions and in-depth interviews. Quantitative monitoring, in contrast, tends to involve record-keeping and numerical counts, such as numbers reached, the number of radio channels airing the talk show and the number of public and private sector organizations engaged.
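For teams that keep their monitoring records electronically, the numerical counts described above can be tallied with a few lines of Python. This is only a minimal sketch: the log format, the field names (`channel`, `estimated_listeners`, `sms_received`) and all figures are hypothetical and are not taken from any real programme.

```python
from collections import Counter

# Hypothetical monitoring log: one record per broadcast of the talk show.
# All names and numbers are invented for illustration.
broadcast_log = [
    {"channel": "Radio A", "estimated_listeners": 1200, "sms_received": 35},
    {"channel": "Radio B", "estimated_listeners": 800, "sms_received": 12},
    {"channel": "Radio A", "estimated_listeners": 1500, "sms_received": 41},
]


def summarize(log):
    """Return simple counts that a monitoring report might track."""
    broadcasts_per_channel = Counter(record["channel"] for record in log)
    return {
        "broadcasts": len(log),
        "channels_airing": len(broadcasts_per_channel),
        # Summed across broadcasts, so repeat listeners are counted each time.
        "total_estimated_listeners": sum(r["estimated_listeners"] for r in log),
        "total_sms_received": sum(r["sms_received"] for r in log),
    }


summary = summarize(broadcast_log)
print(summary)
```

Counts like these show reach and engagement only. They do not replace the qualitative feedback gathered through listening clubs or interviews.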

Evaluation is designed to capture the activity’s longer-term results, e.g. whether the activity has contributed to the higher-level objectives that were set and to potential bigger-picture societal impact.

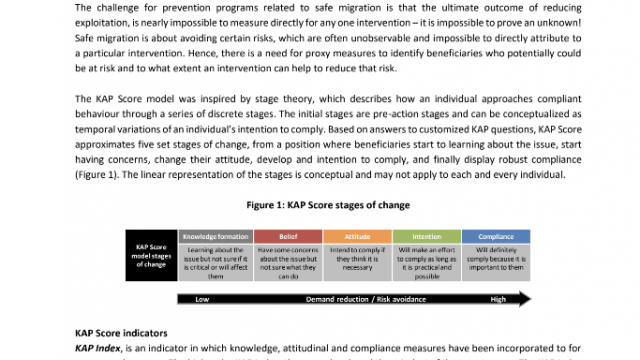

Behaviour change tends to take time and is typically beyond the scope of time-bound programmes. However, within the field of C4D it has long been recognized that, at times, interim social and behavioural change as well as advocacy indicators can act as useful ‘predictors’ of longer-term change.

[BOX blue] CASE STUDY: Unintended Consequences

“The human rights of trafficked persons shall be at the centre of all efforts to prevent and combat trafficking to protect, assist and provide redress to victims.” - S.O.A.P.

It is important to have processes (often in the form of qualitative research) in place to capture unintended or negative impacts on your target audience. For example, information cards with a hotline number can be dangerous for potential victims of trafficking for sexual exploitation to carry: if their traffickers/pimps find the card on them, the victims may be punished for trying to escape. During large sporting events in the United States, trafficked women allegedly spend a significant amount of time hidden in hotel rooms, completely controlled by their pimps/traffickers, and often the only moments they have alone are in the bathroom. For this reason, one counter-trafficking organization distributed bars of soap imprinted with a human trafficking hotline number to hotels in those areas to provide a lifeline for these trafficked women and children.

Source: Save Our Adolescents from Prostitution (S.O.A.P.)

[END BOX blue]

Examples of how to evaluate the success of a radio talk show to answer the evaluation questions in Table 6 include the monitoring activities above, as well as impact assessments that test the community’s knowledge, attitudes and practices (KAP) before and after the communication activities have been conducted.
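As an illustration of the before-and-after comparison, the sketch below computes the percentage-point change in each KAP indicator between a baseline and an endline survey. The indicator names and responses are hypothetical, and the tiny sample is for readability only. A simple difference like this does not by itself show that the communication activity caused the change.

```python
def share_yes(responses):
    """Percentage of respondents who answered 'yes' (True)."""
    if not responses:
        raise ValueError("no responses recorded for this indicator")
    return 100.0 * sum(responses) / len(responses)


def kap_change(baseline, endline):
    """Percentage-point change per indicator between two survey rounds."""
    return {
        indicator: round(
            share_yes(endline[indicator]) - share_yes(baseline[indicator]), 1
        )
        for indicator in baseline
    }


# Hypothetical yes/no answers from five respondents in each survey round.
baseline = {
    "knows_hotline_number": [True, False, False, False, False],
    "would_verify_recruiter": [True, True, False, False, False],
}
endline = {
    "knows_hotline_number": [True, True, True, False, False],
    "would_verify_recruiter": [True, True, True, False, False],
}

changes = kap_change(baseline, endline)
for indicator, change in changes.items():
    print(f"{indicator}: {change:+.1f} percentage points")
```

In practice, a KAP assessment would use a properly sampled survey and, where possible, a comparison group, so that changes can be interpreted with more confidence.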

All information collected throughout the monitoring and evaluation processes, especially lessons learned, should help to inform future activities.

[BOX yellow]

TIP: Remember...

“Innovation implies doing something new and different. It means change. ‘New’, ‘different’, ‘change’; three scary words in any organization. These words imply risk, potential failure and blame. But without risk and failure, we would not have innovations that make a positive difference.”

- Anthony Lake, UNICEF Executive Director

[END BOX]

Depending on what information is required as well as the time, budget and expertise available, there are different methods to gather information, such as questionnaires, most significant change stories and outcome mapping. To learn more about these qualitative and quantitative methods, please refer to Annex V.

[BOX red]

ENGAGE YOUR AUDIENCE

Participatory M&E is a great way to generate local level ownership of communication strategies, content development and processes. Some principles include:

- Local people are active participants, not just sources of information.

- Stakeholders evaluate, outsiders facilitate.

- Focus on building stakeholder capacity for analysis and problem solving.

Source: Sustainable Sanitation and Water Management (SSWM). Participatory Monitoring and Evaluation. Available from: http://www.sswm.info/content/participatory-monitoring-and-evaluation.

[END BOX]

Have you:

- Decided what is to be monitored, and how?

- Identified what will be evaluated, and how?

- Determined how information will be collected?

- Established the lessons learned for future activities?